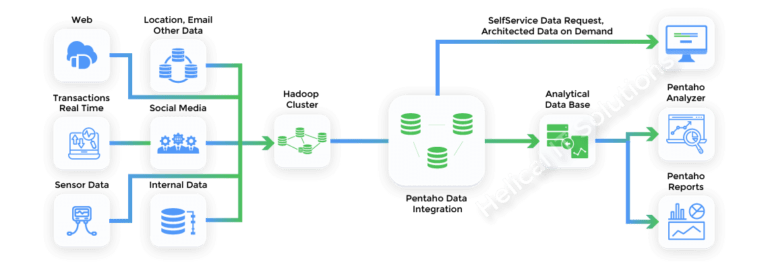

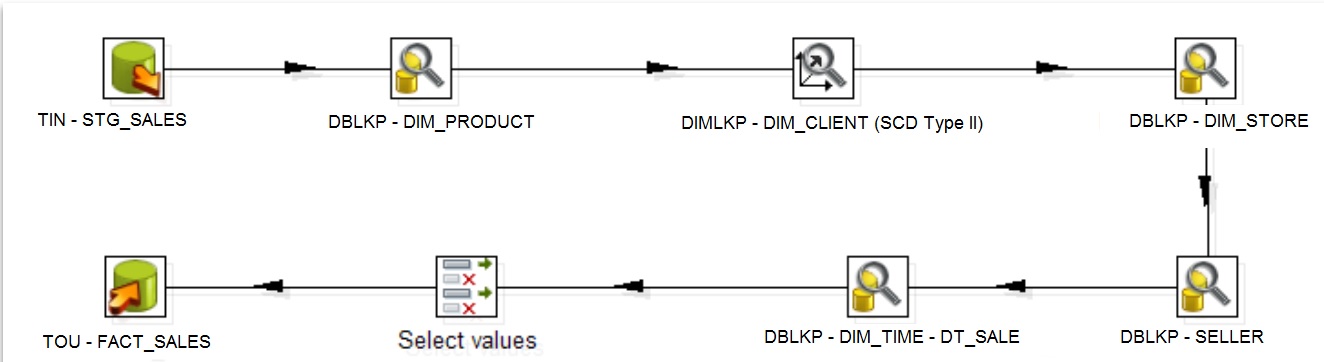

We've just announced a great thing: the cooperation between Pentaho and DataCleaner, which brings DataCleaner's profiling features to all users of Pentaho Data Integration (aka Kettle)! Not only is this something I've been looking forward to for a long time because it is great exposure for us, but it also opens up new doors in terms of functionality.

In this blog post I'll describe something new: data monitoring with Kettle and DataCleaner. While DataCleaner is perfectly capable of doing continuous data profiling, we lack the deployment platform that Pentaho has. A scenario that I often encounter is that someone wants to execute a daily profiling job, archive the results with a timestamp and have the results emailed to the data steward. Previously we would set this sort of thing up with DataCleaner's command line interface, which is still quite a nice solution, but if you have more than just a few of these jobs, it can quickly become a mess. With Pentaho you get orchestration and scheduling, and even a graphical editor.
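To make the daily scenario a bit more concrete, here is a minimal sketch in plain Python of the two steps that follow the profiling run: archiving the result under a timestamped name and preparing an email for the data steward. This is not how the Kettle job does it; it only illustrates the idea. The function names, the file name and the addresses are all hypothetical, and the message is only built, not sent.

```python
from datetime import datetime
from email.message import EmailMessage
from pathlib import Path
import shutil


def archive_with_timestamp(result_file, archive_dir, now=None):
    """Copy result_file into archive_dir, adding a timestamp to its name."""
    now = now or datetime.now()
    source = Path(result_file)
    target_dir = Path(archive_dir)
    target_dir.mkdir(parents=True, exist_ok=True)
    # e.g. profiling.result -> profiling-20120102-030405.result
    stamp = now.strftime("%Y%m%d-%H%M%S")
    target = target_dir / f"{source.stem}-{stamp}{source.suffix}"
    shutil.copy2(source, target)
    return target


def build_steward_email(archived_file, sender, recipient):
    """Build (but do not send) an email with the archived result attached."""
    archived = Path(archived_file)
    message = EmailMessage()
    message["Subject"] = f"Profiling result: {archived.name}"
    message["From"] = sender
    message["To"] = recipient
    message.set_content("The latest profiling result is attached.")
    message.add_attachment(
        archived.read_bytes(),
        maintype="application",
        subtype="octet-stream",
        filename=archived.name,
    )
    return message
```

Sending the message would be one more step (for instance with `smtplib`), and running it every day still needs a scheduler, which is exactly the part an orchestration tool takes care of.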
